Stop AI Hallucinations Before They Hurt Your Business: A Faster, Cheaper Method

Your AI chatbot sounds confident. Even when it's wrong.

You deploy a chatbot to handle customer service. It gives a wrong answer about your return policy. A customer gets angry. You lose the sale and damage your brand.

Or you use AI to summarize a market report. It invents a key statistic. Your team makes a bad decision based on false information.

These mistakes are called AI "hallucinations." They happen all the time. The real problem? Current tools to catch them are too slow and expensive. They require running the AI multiple times or adding complex software. This kills real-time applications.

You need a way to know which answers to trust. And you need it to be fast and cheap.

What Researchers Discovered

A team of researchers found a smarter, faster way to measure an AI's uncertainty. They describe it in the paper "Semantic Token Clustering for Efficient Uncertainty Quantification in Large Language Models."

Think of it like this. When an AI answers a question, it doesn't just pick one word. It considers many possible words that could fit.

For example, if the answer is about a television, the AI might consider the words "TV," "television," "telly," or "screen."

The new method looks at all these synonyms (words with similar meanings). It groups them together. Then it measures how confident the AI is about the entire group of meanings.

If the AI's confidence is spread across several groups with different meanings, its answer is less trustworthy. If its confidence is focused on one tight group, even when it is split among synonyms inside that group, the answer is more reliable.

This gives you a "trust score" for every AI response. The breakthrough? It calculates this score in one single pass of the AI. You don't need to run the model multiple times.
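To make the grouping idea concrete, here is a minimal sketch with made-up numbers. The cluster assignments and probabilities are hypothetical, and the helper `cluster_confidence` is an illustration, not the paper's scoring formula. It only shows why adding up probability over synonyms changes the picture.

```python
from collections import defaultdict

def cluster_confidence(token_probs, token_to_cluster):
    """Sum next-token probabilities per meaning group; return (top group, its mass)."""
    mass = defaultdict(float)
    for token, p in token_probs.items():
        # Tokens without a known group form their own single-token group.
        mass[token_to_cluster.get(token, token)] += p
    best = max(mass, key=mass.get)
    return best, mass[best]

# Hypothetical grouping: three spellings of one meaning, plus two other meanings.
clusters = {
    "TV": "television", "television": "television", "telly": "television",
    "radio": "radio", "phone": "phone",
}

# Case 1: probability is split across synonyms, but the meaning is settled.
focused = {"TV": 0.40, "television": 0.35, "telly": 0.15, "radio": 0.06, "phone": 0.04}
# Case 2: probability is split across different meanings.
scattered = {"TV": 0.35, "radio": 0.35, "phone": 0.30}

focused_result = cluster_confidence(focused, clusters)
scattered_result = cluster_confidence(scattered, clusters)
print(focused_result)    # top group is "television" with mass of about 0.90
print(scattered_result)  # top group holds only about 0.35
```

In the first case no single word gets more than 40%, yet the meaning "television" holds about 90% of the probability. In the second case the top meaning holds only about 35%, which is the kind of answer you would want flagged.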

Why This Matters for Your Business

- It cuts the overhead by 98%. Compared to the best existing accuracy-checking methods, it reduces the extra processing time by an average of 98%. Speed is no longer a barrier.

- It works out of the box. You don't need special training, extra data, or another AI model to check the first one. It's like a plugin for your existing AI.

- It's just as accurate. Tests across multiple AI models show it performs on par with the slower, gold-standard methods. You don't sacrifice reliability for speed.

This makes real-time "trust scoring" viable. You can finally use AI safely in live chat, data processing, and other high-volume tasks.

How to Apply This Today

You can start building a safety net for your AI applications immediately. Here are five concrete steps to implement this week.

Prerequisite: This method works with "open" AI models where you can access their internal workings. This includes popular models like Meta's Llama 3, Mistral AI's models, and other open-source options. It does not currently work with closed "black-box" APIs like OpenAI's GPT-4.

Step 1: Choose Your Implementation Path

You have two main options:

- Use the Research Code: The authors have likely released code with their paper. Check the arXiv page for links to a GitHub repository. This is the most direct path.

- Leverage a Framework: Watch for this technique to be integrated into popular AI toolkits like LMQL, Outlines, or vLLM. These frameworks make it easier to apply advanced sampling and scoring techniques.

Action this week: Clone the research repository or identify the framework you'll use. Set up a simple test environment.

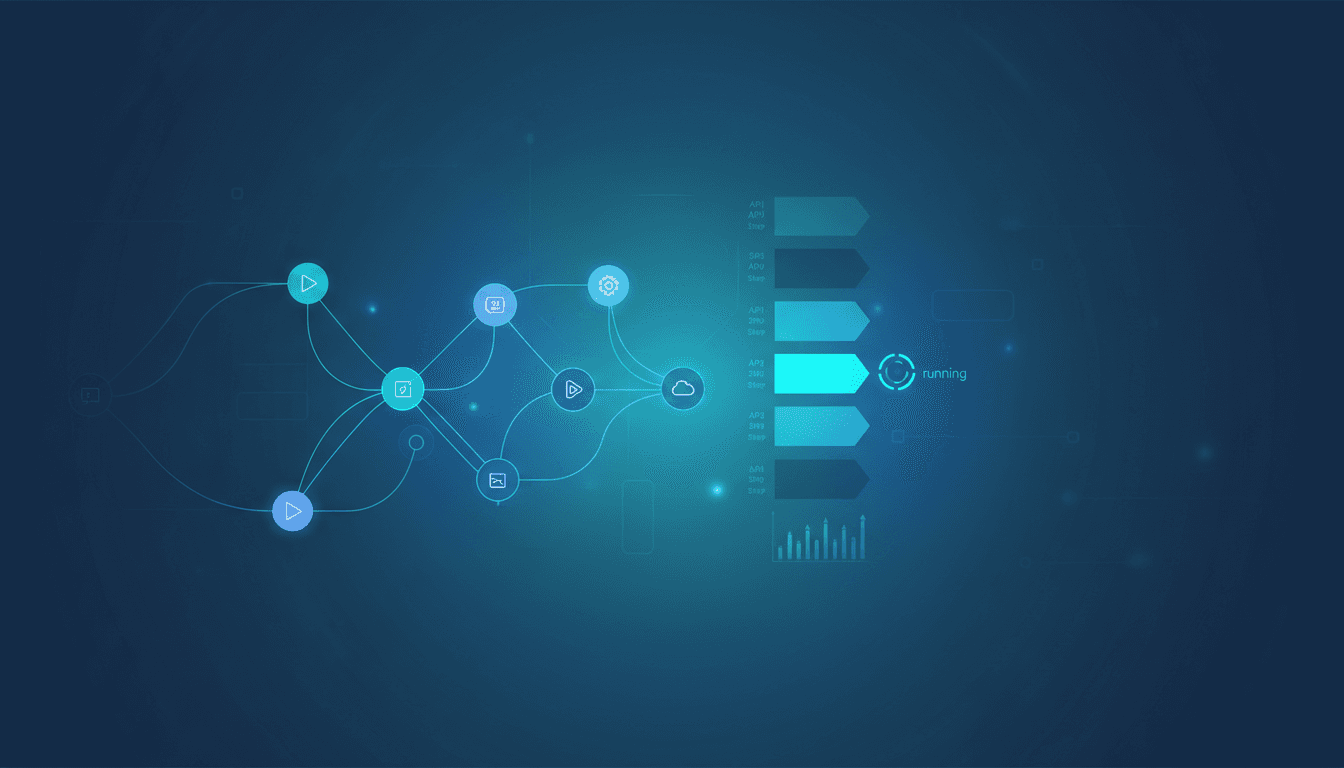

Step 2: Integrate with Your Model Loader

The method needs access to the AI's "logits": the raw scores it assigns to every possible next token (word piece). Most model-loading libraries provide this.

If you're using Hugging Face's transformers library, you can access these scores during generation. You will modify your text generation pipeline to not just get the final text, but also collect the model's internal confidence scores for each step.

For example: Instead of calling model.generate() with default settings, pass output_scores=True and return_dict_in_generate=True so it returns the scores for each token the model produces. If you need more control, you can write a custom generation loop that saves the logit scores at each step.
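Once you have the per-step scores, the next job is turning them into probabilities and keeping the top candidates. The sketch below assumes the scores have already been converted to plain lists of floats; the tiny `vocab` and the numbers in `step_logits` are invented for illustration, and `top_candidates` is a hypothetical helper, not part of any library.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_candidates(step_logits, vocab, k=5):
    """For each generation step, return the k most probable (token, probability) pairs."""
    steps = []
    for logits in step_logits:
        probs = softmax(logits)
        ranked = sorted(zip(vocab, probs), key=lambda tp: tp[1], reverse=True)
        steps.append(ranked[:k])
    return steps

# Stand-in data: one list of logits per generated token, one entry per vocab item.
vocab = ["TV", "television", "telly", "radio"]
step_logits = [
    [2.0, 1.8, 0.5, -1.0],
    [0.1, 0.0, 3.0, 0.2],
]

steps = top_candidates(step_logits, vocab, k=2)
for position, candidates in enumerate(steps):
    print(position, candidates)
```

Keeping only the top few candidates per step is a practical shortcut assumed here to keep the later clustering cheap. Check the paper for how the authors choose which tokens to consider.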

Step 3: Implement the Clustering Logic

This is the core of the method. For each position in the AI's answer, you will:

- Take the model's predicted scores for the next word.

- Use the AI's own built-in understanding of word meanings (its "embedding" space) to find words that are semantically similar.

- Cluster those similar words together.

- Calculate a confidence score based on how the probability mass is distributed across clusters.

Tool to use: For the clustering step, you can use a fast, standard algorithm like Agglomerative Clustering from the scikit-learn library. The research paper provides the specific formula for calculating the final uncertainty score from these clusters.
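The four steps above can be sketched as follows. To keep the example dependency-free, it uses a simple greedy grouping by cosine similarity as a stand-in for Agglomerative Clustering, and it scores uncertainty as one minus the probability of the heaviest cluster. Both choices, the 0.8 similarity threshold, and the two-dimensional toy embeddings are assumptions for illustration. The paper's own formula may differ.

```python
import math

def cosine(a, b):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def greedy_clusters(tokens, embeddings, threshold=0.8):
    """Put each token in the first cluster whose seed token is similar enough."""
    clusters = []  # each cluster is a list of tokens; the first one is its seed
    for tok in tokens:
        for cluster in clusters:
            if cosine(embeddings[tok], embeddings[cluster[0]]) >= threshold:
                cluster.append(tok)
                break
        else:
            clusters.append([tok])  # no similar cluster found, start a new one
    return clusters

def cluster_uncertainty(token_probs, embeddings, threshold=0.8):
    """Return 1 minus the probability mass of the heaviest meaning cluster."""
    # Visit the most probable tokens first so they become the cluster seeds.
    tokens = sorted(token_probs, key=token_probs.get, reverse=True)
    clusters = greedy_clusters(tokens, embeddings, threshold)
    masses = [sum(token_probs[t] for t in c) for c in clusters]
    return 1.0 - max(masses)

# Toy 2-D embeddings (real models use hundreds or thousands of dimensions).
embeddings = {
    "TV": [1.0, 0.1], "television": [0.9, 0.2],
    "telly": [0.95, 0.05], "radio": [0.1, 1.0],
}
token_probs = {"TV": 0.5, "television": 0.3, "telly": 0.1, "radio": 0.1}

uncertainty = cluster_uncertainty(token_probs, embeddings)
print(round(uncertainty, 3))
```

To score a whole answer you would combine the per-position values, for example by averaging them or taking the worst one. Which aggregation works best is something to check against the paper and your own test data.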

Step 4: Set Confidence Thresholds & Create Actions

The method outputs a score. You must decide what to do with it.

- For a Customer Service Chatbot: Any answer with an uncertainty score above a set threshold is flagged. It gets routed to a human agent for review before being sent to the customer.

- For an Internal Research Tool: Answers come with a confidence label: "High Confidence," "Medium - Verify," or "Low Confidence - Likely Incorrect."

Start simple: In your test, set a threshold that catches 90% of known wrong answers. Adjust based on your tolerance for risk versus human review cost.
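Here is one way to pick that starting threshold from a small labeled test set. The scores and labels below are invented, and `threshold_for_recall` and `route` are hypothetical helper names. The sketch treats a score at or above the threshold as flagged.

```python
import math

def threshold_for_recall(scores, is_wrong, target_recall=0.9):
    """Highest threshold that still flags at least target_recall of known wrong answers."""
    wrong_scores = sorted((s for s, w in zip(scores, is_wrong) if w), reverse=True)
    if not wrong_scores:
        raise ValueError("need at least one known wrong answer")
    # Number of wrong answers that must be caught (small epsilon guards float error).
    needed = max(1, math.ceil(target_recall * len(wrong_scores) - 1e-9))
    return wrong_scores[needed - 1]

def route(score, threshold):
    """Send uncertain answers to a human; let the rest go straight out."""
    return "human_review" if score >= threshold else "send"

# Made-up uncertainty scores from a test run, with human labels.
scores   = [0.05, 0.10, 0.62, 0.15, 0.71, 0.33, 0.48, 0.90]
is_wrong = [False, False, True, False, True, False, True, True]

threshold = threshold_for_recall(scores, is_wrong, target_recall=0.9)
print(threshold)
print([route(s, threshold) for s in scores])
```

Lowering the target catches fewer wrong answers but sends fewer correct ones to human review, which is the risk versus cost trade-off described above.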

Step 5: Deploy a Pilot in a Controlled Area

Don't roll this out to all customers at once. Choose a low-risk, high-value application for a two-week pilot.

Good pilot candidates:

- An internal chatbot that answers HR policy questions.

- A tool that summarizes customer feedback tickets.

- A first-level filter for email support where all outputs are reviewed by a human.

Measure two things during the pilot:

- Accuracy Improvement: How many fewer wrong answers go unchecked?

- Performance Impact: What is the added latency (delay) to your AI responses? It should be minimal. A reasonable working target is under 100 milliseconds.
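Both pilot measurements can be pulled from a simple log. The sketch below assumes each logged response records its uncertainty score, a human verdict, and response times with and without scoring. The field names and the sample values are made up for illustration.

```python
import statistics

def pilot_report(records, threshold):
    """Summarize how many wrong answers slipped through and how much latency was added."""
    wrong = [r for r in records if r["is_wrong"]]
    # A wrong answer is "unchecked" if its score stayed below the review threshold.
    unchecked = [r for r in wrong if r["score"] < threshold]
    added_ms = [r["scored_ms"] - r["base_ms"] for r in records]
    return {
        "wrong_total": len(wrong),
        "wrong_unchecked": len(unchecked),
        "median_added_ms": statistics.median(added_ms),
        "max_added_ms": max(added_ms),
    }

# Hypothetical pilot log entries.
records = [
    {"score": 0.10, "is_wrong": False, "base_ms": 420.0, "scored_ms": 431.0},
    {"score": 0.72, "is_wrong": True,  "base_ms": 510.0, "scored_ms": 524.0},
    {"score": 0.35, "is_wrong": True,  "base_ms": 390.0, "scored_ms": 399.0},
    {"score": 0.55, "is_wrong": False, "base_ms": 460.0, "scored_ms": 472.0},
]

report = pilot_report(records, threshold=0.5)
print(report)
```

Comparing `wrong_unchecked` against `wrong_total` shows how much the safety net is catching, and the added-latency figures tell you whether the check fits your response-time budget.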

What to Watch Out For

This method is powerful, but it has limits. Knowing them helps you use it correctly.

- Closed Models Are a No-Go. It only works with AI models where you can see the internal confidence scores. You cannot use it directly with most paid API services (like GPT-4, Claude, or Gemini) because they don't expose the full set of scores and word embeddings it needs. Some APIs return log-probabilities for a handful of top tokens. Where that is available, it is your fallback.

- Word Meaning Mix-Ups. The method uses the AI's own sense of word meanings. A word that is spelled the same but means different things (like "bank" for money and the "bank" of a river) can end up grouped with the wrong neighbors. This can add a little noise to the confidence score. It's still highly effective, but not perfect.

- Scores Are Relative, Not Absolute. The output is a "trust score," not a precise probability like "92% chance this is correct." It's excellent for ranking answers by reliability and flagging low-confidence ones. Don't treat it as a perfect statistical probability.
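Because the score is meant for ranking, a ranking metric is a sensible way to test it. A common choice is AUROC: the chance that a randomly picked wrong answer gets a higher uncertainty score than a randomly picked correct one. The sketch below computes it directly from pairs. The sample scores and labels are invented.

```python
def auroc(uncertainty, is_wrong):
    """Share of (wrong, correct) pairs where the wrong answer scores higher; ties count half."""
    wrong = [u for u, w in zip(uncertainty, is_wrong) if w]
    right = [u for u, w in zip(uncertainty, is_wrong) if not w]
    if not wrong or not right:
        raise ValueError("need at least one wrong and one correct answer")
    wins = 0.0
    for w in wrong:
        for r in right:
            if w > r:
                wins += 1.0
            elif w == r:
                wins += 0.5
    return wins / (len(wrong) * len(right))

# Made-up uncertainty scores with human labels.
uncertainty = [0.9, 0.2, 0.6, 0.1, 0.4]
is_wrong    = [True, False, False, False, True]

ranking_quality = auroc(uncertainty, is_wrong)
print(round(ranking_quality, 3))
```

A value of 0.5 means the score ranks answers no better than chance, and 1.0 means every wrong answer scored higher than every correct one. The pairwise loop is fine for a few thousand test answers. For larger sets a sort-based version is faster.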

Your Next Move

Start by testing one open-source model.

This week, take a model you already use or experiment with Llama 3 (via Hugging Face). Implement a basic version of this scoring system on a single, specific task. See how the confidence scores correlate with actual answer quality.

This isn't about a full production rollout yet. It's about proving to yourself that a simple, fast check can identify your AI's weak spots. Once you see it working, you can design the safety net your business needs.

What's the first AI task in your company where a wrong answer causes the most pain? Could a real-time confidence score have prevented it?
